[ For more information on the Eideticker software I’m referring to, see this entry ]

tl;dr: You can now run the standard eideticker benchmarks easily on any Android phone without any kind of specialized hardware.

So Eideticker is pretty great at comparing relative performance between different browsers and generally measuring things in an absolutely neutral way. Unfortunately it’s a bit of a pain to use it at the moment to catch regressions: the software still has a few bugs and encoding/decoding/analyzing the capture still takes a great deal of time. Not to mention the fact that it currently requires specialized hardware (though that will soon be less of a concern at least inside MoCo, where we have a bunch of Eideticker boxes on order for the Toronto and Mountain View offices).

A few months ago, Chris Lord wrote up some great code to internally measure the amount of checkerboarding going on in Fennec. I’ve thought for a while that it would be a neat idea to hook this up to the Eideticker harness, and today I finally did so. After installing Eideticker, you can now run the benchmark on any machine against an arbitrary fennec build just by typing this from the eideticker root directory:

adb shell setprop log.tag.GeckoLayerRendererProf DEBUG

./bin/get-metric-for-build.py --no-capture --get-internal-checkerboard-stats --num-runs 3 nightly.apk src/tests/scrolling/taskjs.org/index.html

In return, you’ll get some nice clean results like this:

=== Internal Checkerboard Stats (sum of percents, not percentage) ===

[167.34348, 171.871015, 175.3420296]
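If you want to compare builds, it helps to reduce those per-run numbers to a mean and a spread. Here is a minimal sketch that does this, assuming the script's output looks exactly like the sample above (a header line followed by a Python-style list). The `parse_checkerboard_stats` helper is my own illustration and is not part of Eideticker.

```python
import ast
import statistics

# Assumed prefix of the header line, taken from the sample output above.
HEADER = "=== Internal Checkerboard Stats"


def parse_checkerboard_stats(output):
    """Return the per-run values printed on the line after the stats header."""
    lines = [line.strip() for line in output.splitlines() if line.strip()]
    for i, line in enumerate(lines):
        if line.startswith(HEADER) and i + 1 < len(lines):
            # The values are printed as a Python-style list literal.
            return [float(v) for v in ast.literal_eval(lines[i + 1])]
    raise ValueError("no checkerboard stats found in output")


sample_output = """
=== Internal Checkerboard Stats (sum of percents, not percentage) ===

[167.34348, 171.871015, 175.3420296]
"""

runs = parse_checkerboard_stats(sample_output)
print("runs:  %s" % runs)
print("mean:  %.2f" % statistics.mean(runs))
print("stdev: %.2f" % statistics.stdev(runs))
```

With only three runs the standard deviation is a rough guide at best, but it is enough to tell whether a difference between two builds is bigger than the run-to-run noise.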

Just to be sure that the results were comparable, I did a quick set of runs on the Eideticker machine in Mountain View with both internal checkerboard statistics gathering and HDMI capturing enabled.

| Stats file | HDMI capturing |
| --- | --- |
| 167.34348 | 177.022 |
| 171.87 | 184.46 |
| 175.34 | 184.44 |

While the results aren’t identical (we measure number of frames differently inside Fennec than we do with Eideticker, for one thing), they do seem roughly correlated. So go forth, benchmark and tweak! 😉
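To put a number on "roughly correlated", you can compute a Pearson correlation coefficient over the three pairs of results above. This is only a sketch: the `pearson` function is written out by hand for illustration, and three data points are far too few to draw any firm conclusion.

```python
import math


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)


# Values copied from the comparison above.
internal = [167.34348, 171.87, 175.34]   # internal stats file
hdmi = [177.022, 184.46, 184.44]         # HDMI capture

r = pearson(internal, hdmi)
print("Pearson r = %.2f" % r)
```

A value close to 1 means the two measurements move together, which is what matters for bisection: the absolute numbers differ, but a regression should show up in both.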

P.S. If you’ve been following mobile automation, you might be asking why I don’t just suggest running Talos and Robocop on your workstation. Can’t they do the same sorts of things? The short answer is that yes, they can, but unfortunately they’re much more involved to set up and use at the moment. Arguably they shouldn’t be, and this is something we (Mozilla tools & automation) need to work on. We’ll get there eventually (and help would be welcome!). For now, hacks like this should help with getting out the first release of Fennec by providing a fast, easy to use tool for bisection and analysis.

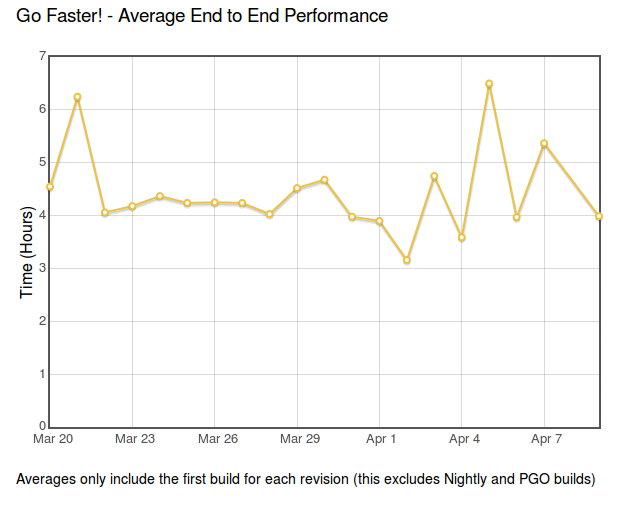

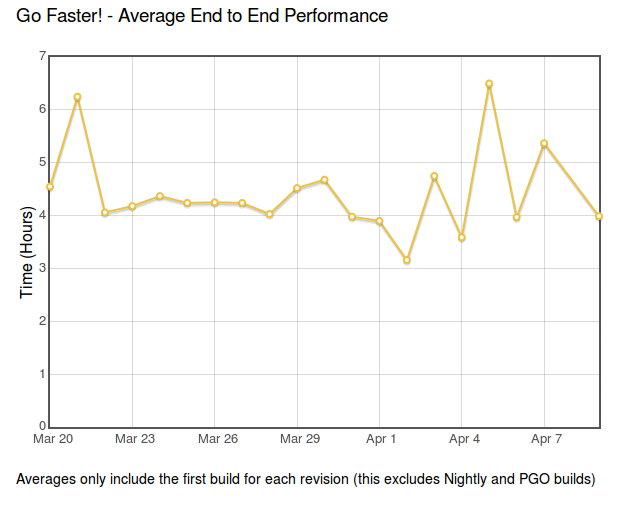

Build times for mozilla-central are a major factor in developer productivity. Faster build times mean more people using try (reducing breakage) and more fine-grained regression ranges (reducing the impact of breakages). As a side benefit, it allows us to avoid buying and maintaining more hardware (or put new hardware to better use). About a half-year ago, we set up a project called BuildFaster to try to bring these times down, setting the ambitious goal of getting build times (from checkin to tests done) down to 2 hours. We didn’t quite succeed, though we did make some major strides. As part of this project, we also developed a dashboard to track our progress and narrow down the major bottlenecks which were keeping up our build times.

Unfortunately, this dashboard went down earlier this year with the rest of Brasstacks and we hadn’t had the chance to bring it back up. I’m pleased to announce that thanks to Jonathan Griffin, it’s finally back online.

While no one is actively working on build performance at the moment (at least to my knowledge), it’s still useful to keep track of build times to make sure that we don’t regress. Anecdotally, it has seemed to me that the time needed to get results from try has been pretty stable over the last while, and this is borne out by the results:

As the cliche goes: no news is good news.


I participated in an interesting meeting on checkerboarding in Firefox for Android yesterday. As a reminder, checkerboarding refers to the amount of time you spend waiting to see the full page after you do a swipe on your mobile device, and it’s a big issue right now: so much so that it puts our delivery goal for the new native browser at risk.

It seems like we have a number of strategies for improving performance which will likely solve the problem, but we need to be able to measure improvements to make sure that we’re making progress. This is one of the places where Eideticker could be useful (especially with regard to measuring us against the competition), though there are a few things that we need to add before it’s going to be as useful as it could be. The most urgent, as I understand it, is to come up with a suite of tests which accurately represents the set of pages that we’re having issues with. The main test case for checkerboarding that we’re currently using with Eideticker is taskjs.org which, while an interesting test case in some ways, doesn’t accurately represent the sort of site that the user would normally go to in the wild (and thus be annoyed by). 😉

This is going to take a few days (and a lot of review: I’m definitely no expert when it comes to this stuff) to get right, but I just added two tests for the New York Times which I think are a step in the right direction of being more representative of real-world use cases. Have a look here:

http://wrla.ch/eideticker/dashboard/#/nytimes-scrolling

http://wrla.ch/eideticker/dashboard/#/nytimes-zooming

The results here actually aren’t as bad as I would have expected/remembered. The amount of checkerboarding after a zoom out is a bit annoying (I understand this is a known issue with font caching, or something) but not too terrible. Still, any improvements that show up here will probably apply across a wide variety of sites, as the design patterns on the New York Times site are very common.

(P.S. yes, I know I promised a comparison with Google Chrome for Android last time: rest assured that’s still coming soon!)

Just thought I’d mention this because I found it handy.

A while back AaronMT wrote up some clever instructions on taking Android screenshots by dumping the contents of ‘/dev/fb0’ and running ffmpeg on the results. This is useful, but you need to know the resolution of the device you have connected to pass the right arguments to ffmpeg. Wouldn’t it be better if you had just one script that would work for whatever device you had plugged in?

In fact, there is a way to do this using the monkeyrunner utility. Intended mainly as a tool for synthesizing input on Android (more on that some other time), you can also easily get a capture of the Android screen with its python/jython API (assuming you have the Android SDK installed). Here’s a quick script which does the job:

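# monkeyrunner runs scripts under Jython, hence the Python 2 syntax.
from com.android.monkeyrunner import MonkeyRunner, MonkeyDevice
import os
import sys

# Expect exactly one argument: the output filename.
if len(sys.argv) != 2:
    print "Usage: %s <filename>" % os.path.basename(sys.argv[0])
    sys.exit(1)

# Wait for a device, grab the screen and save it as a PNG.
device = MonkeyRunner.waitForConnection()
result = device.takeSnapshot()
result.writeToFile(sys.argv[1], 'png')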

Copy that into a file called capture.py (or whatever), then run it like so:

monkeyrunner capture.py screenshot.png

And you’re off to the races! Nice screenshot, no utilities or non-essential command line arguments required!

(credit to this stackoverflow answer for the idea)


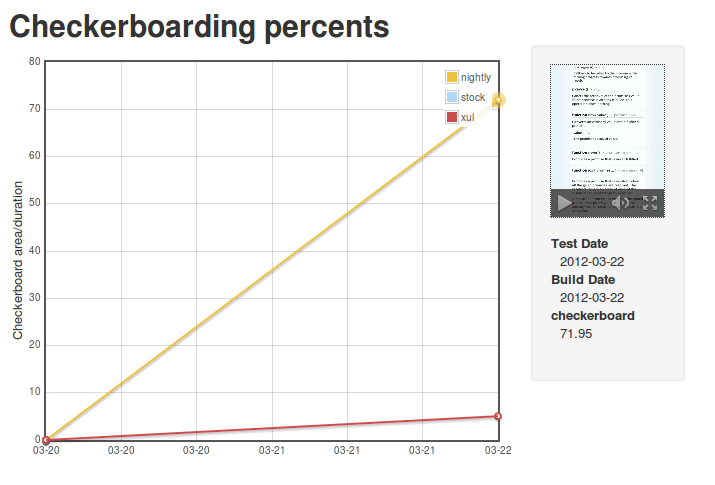

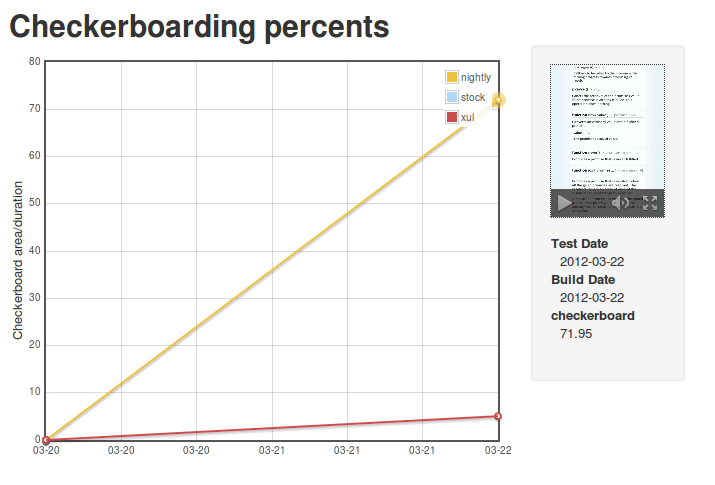

Since my first Eideticker dashboard post was so well received, I thought I’d give a quick update on another metric that I just brought online: checkerboarding (a.k.a. the amount of time you spend waiting to see the full page after you do a swipe on your mobile device).

[ link to real thing ]

Unfortunately the news here is not as good as before: as the numbers indicate, the new Native Fennec currently performs substantially worse than the version in Android market. This is a known issue, and is currently being tracked in bug 719447.

Next up: Seeing how we do against Google Chrome for Android.

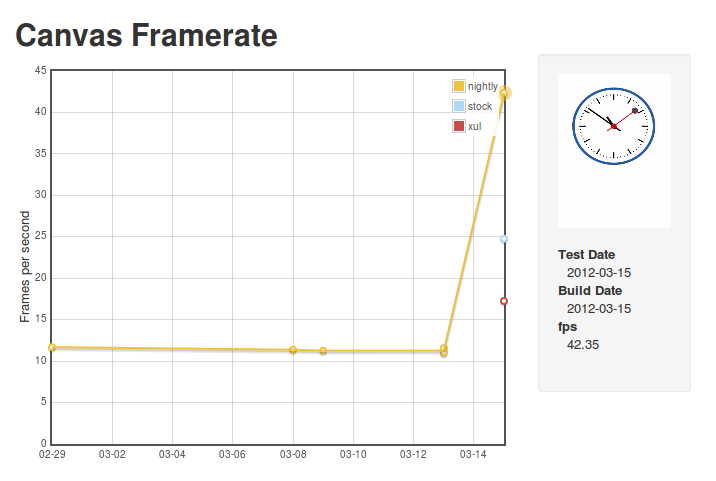

Over the last while, Clint Talbert and I have been working on setting up automatic mobile performance tests using Eideticker (a framework to measure perceived Firefox performance by video capturing automated browser interactions: for more information, see my earlier post).

There are many reasons why this is interesting, but probably the most important one is that it can measure differences reliably across different types of mobile browsers. Currently I’m testing the old XUL Fennec, the Android stock browser, and the latest nightlies.

I’m pleased to announce that the first iteration of the dashboard is available for public consumption, on my site.

http://wrla.ch/eideticker/dashboard/#/canvas

The demo is pretty cheesy (just click on any of the datapoints to see the video capture), but nonetheless does seem to illustrate some interesting differences between the three browsers. The big jump in performance for nightly comes from the landing of the Maple branch, which happened earlier this week. Hopefully this validates some of the work that the mobile/graphics team has been doing over the past while. Exciting times!

For the last few days I’ve been experimenting with getting a Pandaboard running Android 4.0, continuing the work that Clint Talbert started in the fall to get these boards ready for use as a replacement for the Tegra in Mozilla’s Android automation. The first objective is to get a reproducible build going; after that we’ll try to get some of our custom tools (SUTAgent & friends) installed by default.

So far this has been interesting. Much as Clint did before, I thought I’d document some of the notes on what I did in the hopes that they’ll be helpful to other people trying to do similar things.

Getting things up and running is a two-step process. First, you build the beast. The build part, at least, is more or less straightforward. Just follow the instructions here:

Note that you almost certainly want to build in the “eng” configuration, which is rooted and (apparently) has some extra tools installed.

Installing it is a little more tricky. The way they want you to do this is put the pandaboard into a special mode and copy the stuff you built onto an sdcard. Seem a little funny to you? Yeah, it does to me too. Why not just build an sdcard image directly?

Nonetheless, this is the officially supported way of imaging a pandaboard, so let’s just follow it until we can think of a better way of doing things. The instructions for doing this on the pandaboard are located in the source tree here:

[device/ti/panda/README](http://source-android.frandroid.com/device/ti/panda/README)

These are mostly correct as far as I can tell, but there are a few gotchas. First, you need to run the commands mentioned as root unless you’ve configured the USB device to be accessible by your user. Second, most of those commands are not in the path by default, so you’ll need to specify the full path to e.g. the fastboot utility. The instructions here cover these exceptional cases: I recommend following them instead.

One thing which neither document mentions is that you really need to make sure your sdcard is wiped completely clean before using fastboot. The “oem format” step only recreates the partition table; it doesn’t delete any corrupted partitions. If you reboot while these are still in place, the board will try to bring up your corrupted version of Android, not the fastboot console. I spent quite some time debugging why I couldn’t properly flash the operating system before realizing this. The easiest way to get around this is to dd /dev/zero onto the sdcard before beginning the flashing process.

Also, while not strictly necessary to get something up and running, I highly recommend getting an HDMI monitor as well as a serial-to-USB adapter. The former is useful to see if your Android device actually booted successfully; the latter is useful for debugging boot issues where you don’t get that far (the serial console is always available from boot).

So, after painfully learning about the above caveats, I have managed to get things mostly working. I can see the ICS homescreen on my attached HDMI monitor and interact with it if I attach a USB mouse. The one gotcha is that both ethernet and WIFI networking are totally broken. Plugging in an ethernet cable or connecting to a WIFI network seems to result in the machine randomly rebooting, with the logs saying nothing useful. Both of these things are ostensibly supposed to be working according to the latest I’ve read from Google so I’m not exactly sure what’s going on. Investigations will continue.

A few years ago, I fancied the idea of creating my own software consulting business. For some reason, I thought “Masala Labs” would be a cool and witty name for it (“masala” means spice mixture in Hindi, and who doesn’t like spicy things?), so I registered the domain along with the associated small business paperwork in Nova Scotia. I only ever had one client, and it was only a few months before I wound up joining a few of my colleagues in founding “The Navarra Group” (which is also now defunct, a story I will perhaps tell at another time), so it never got very huge.

Anyway, I still had my own domain, and I wanted to move my blog from livejournal, which was beginning to jump the shark. The masalalabs domain was mine, so why not use it? I created a wordpress blog, pointed my DNS registration at it, and started writing. This worked well for a while (names don’t really matter for random personal blogs), but lately I’ve been wanting to start a new project and “Masala Labs” doesn’t exactly seem like a winning banner for me to put it under.

So I’ve decided it’s time for a bit of a change. I just registered the Swiss domain name ‘wrla.ch’, created a fancy new landing page, and made this blog its first “sub site”. More to come.

So, for all 20 of you who are subscribed to my doings, please update your links. For those of you using Google Reader / RSS, the new feed is at this URL. All the old masalalabs links should at least work until the domain expires in a few years, so there’s no huge rush. Then again, you’ll probably forget if you don’t do it now (and thus be deprived of my endless ravings about checkerboarding on mobile Fennec on Android), so why wait?

I’ve been spending a bit more time on refining the checkerboarding tests in Eideticker that I talked about last time. Most of my work has been focused on making the results as representative of a real-world scenario as possible. To that end, I have:

- Changed the test case from a web site of my own concoction to a more realistic example (the taskjs.org site).

- Switched to actual Android native events (via MonkeyRunner) to synthesize touch-based scrolling, instead of simulating the event in JavaScript (which exercises a completely different codepath).

- Fixed various synchronization issues to make results more repeatable. Before, captures were of wildly variable lengths, which made the numbers extremely suspect. There are probably still a few issues, but far fewer than before.
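To give a flavour of what synthesizing a native scroll looks like, here is a rough sketch using monkeyrunner's `MonkeyDevice.drag()`. This is not the actual Eideticker code: the `swipe_points` and `scroll_down` helpers, the swipe geometry and the timing values are all assumptions of mine, chosen purely for illustration.

```python
def swipe_points(width, height, margin=0.25):
    """Return (start, end) points for an upward swipe through the screen centre."""
    x = width // 2
    start = (x, int(height * (1 - margin)))
    end = (x, int(height * margin))
    return start, end


def scroll_down(device, width, height, swipes=3, duration=0.5, steps=10):
    """Scroll the page down by dragging upwards `swipes` times."""
    start, end = swipe_points(width, height)
    for _ in range(swipes):
        # drag(start, end, duration in seconds, number of interpolation steps)
        device.drag(start, end, duration, steps)


def main():
    # This import only works when the script is run by the monkeyrunner tool.
    from com.android.monkeyrunner import MonkeyRunner
    device = MonkeyRunner.waitForConnection()
    # Assumes the device reports its screen size through these properties.
    width = int(device.getProperty('display.width'))
    height = int(device.getProperty('display.height'))
    scroll_down(device, width, height)
```

To try it, add a call to `main()` at the bottom of the file and run it with monkeyrunner. Keeping the geometry in its own function means you can check the coordinates without having a device attached.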

The end result of this is a framework that gives much more meaningful results. The bad news is that the results that I’m measuring don’t show a very positive picture for where we’re at with the native re-write of Firefox. Even relative to the version of mobile Firefox which is currently on the Android Market, we still have some catching up to do. Here’s some video of the “old” firefox in action:

And here’s the Native fennec (what we’re currently offering in nightly, with some minor modifications by me to change the way the “checkerboard” is drawn for analysis purposes):

The numbers behind this comparison:

| Platform | Percent checkerboarding over run of test |
| --- | --- |
| Old Fennec | 2% |
| Native Fennec | 57% |

(by the way, this performance regression is filed as bug 719447)
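For the curious, here is one way a "percent checkerboarding over run of test" figure could be derived from per-frame measurements. This is a sketch under my own assumptions, not a description of Eideticker's analysis code: it assumes each captured frame has already been reduced to the fraction of the screen that is checkerboarded, and the `frames` values below are invented purely to show the arithmetic.

```python
def checkerboard_summary(frame_fractions):
    """Summarize per-frame checkerboard fractions (each between 0.0 and 1.0)."""
    total = sum(frame_fractions)
    n = len(frame_fractions)
    return {
        # Per-frame percentages added together; grows with capture length.
        "sum_of_percents": 100.0 * total,
        # Average over the whole run; comparable between captures.
        "percent_over_run": 100.0 * total / n if n else 0.0,
        # Number of frames showing any checkerboarding at all.
        "frames_with_checkerboard": sum(1 for f in frame_fractions if f > 0),
    }


# Hypothetical capture: 8 frames, 3 of which show some checkerboarding.
frames = [0.0, 0.5, 1.0, 0.5, 0.0, 0.0, 0.0, 0.0]
summary = checkerboard_summary(frames)
for key in sorted(summary):
    print("%s: %s" % (key, summary[key]))
```

The distinction between the sum and the average is why capture lengths need to be consistent: a sum is only comparable between runs that have the same number of frames.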

I know there’s a lot of great effort going into improving this situation, so I have hope that we’ll be doing much better on this metric in the coming days/weeks. The process for creating these videos/analyses is mostly automated at this point, so my plan is to create a small dashboard (à la arewefastyet.com) to measure these numbers over time on the latest nightlies. Stay tuned!

After my post on measuring checkerboarding in mobile Firefox, Clint Talbert (my fearless manager) suggested I run a before and after test to measure the improvement that just landed as part of bug 709512. After a bit of cleanup, I did so, measuring the delta between my build on December 20th and the latest version of Aurora. The difference is pretty remarkable: at least on the LG G2X that I’ve been using for testing, we’ve gone from checkerboarding 10–20% of the time to almost not checkerboarding at all (across two runs of the test with the Aurora build, there is exactly one frame that checkerboards). All credit to Chris Lord for that!

See the video evidence for yourself. Before:

After: