Showing posts tagged Mozilla

[ originally posted on mozilla.dev.platform ]

Hello from Platform Engineering Operations! Once a month we highlight one of our projects to help the Mozilla community discover a useful tool or an interesting contribution opportunity.

This month’s project is Perfherder!

What is Perfherder?

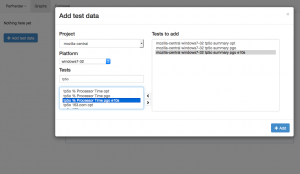

Perfherder is a generic system for visualizing and analyzing performance data produced by the many automated tests we run here at Mozilla (such as Talos, “Are we fast yet?” or “Are we slim yet?”). The chief goal of the project is to make sure that performance of Firefox gets better, not worse over time. It does this by:

- Tracking the performance generated by our automated tests, allowing them to be visualized on a graph.

- Providing a sheriffing dashboard which allows for incoming alerts of performance regressions to be annotated and triaged - bugs can be filed based on a template and their resolution status can be tracked.

In addition to its own user interface, Perfherder also provides an API on the backend that other people can use to build custom performance visualizations and dashboards. For example, the metrics group has been working on a set of release quality indices for performance based on Perfherder data:

https://metrics.mozilla.com/quality-indices/

How it works

Perfherder is part of Treeherder, building on that project’s existing support for tracking revision and test job information. Like the rest of Treeherder, Perfherder’s backend is written in Python, using the Django web framework. The user interface is written as an AngularJS application.

Learning more

For more information on Perfherder than you ever wanted to know, please see the wiki page:

https://wiki.mozilla.org/EngineeringProductivity/Projects/Perfherder

Can I contribute?

Yes! We have had some fantastic contributions from the community to Perfherder, and are always looking for more. This is a great way to help developers make Firefox faster (or use less memory). The core of Perfherder is relatively small, so this is a great chance to learn either Django or Angular if you have a small amount of Python and/or JavaScript experience.

We have set aside a set of bugs that are suitable for getting started here:

https://bugzilla.mozilla.org/buglist.cgi?list_id=12722722&resolution=---&status_whiteboard_type=allwordssubstr&query_format=advanced&status_whiteboard=perfherder-starter-bug

For more information on contributing to Perfherder, please see the contribution section of the above wiki page:

https://wiki.mozilla.org/EngineeringProductivity/Projects/Perfherder#Contribution

It’s a small thing, but I submitted a patch to trychooser last week which adds a tooltip indicating the actual Talos tests that are run as part of the various jobs that you can schedule as part of a try push. It’s in production as of now:

Previously, the only way to do this was to dig into the actual buildbot code, which was more than a little annoying.

If you think your patch might have a good chance of regressing performance, please do run the Talos tests before you check in. It’s much less work for all of us when these things are caught before integration and back outs are no fun for anyone. We really need better documentation for this stuff, but meanwhile if you need help with this, please ask in the #perf channel on irc.mozilla.org

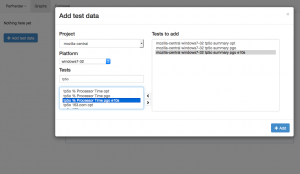

In addition to the database refactoring I mentioned a few weeks ago, some cool stuff has been going into Perfherder lately.

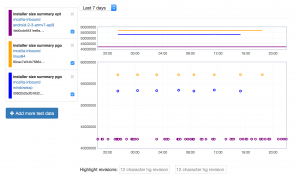

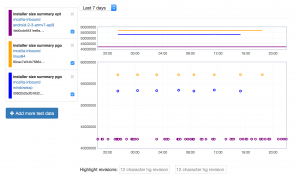

Tracking installer size

Perfherder is now tracking the size of the Firefox installer for the various platforms we support (bug 1149164). I originally only intended to track Android .APK size (on request from the mobile team), but installer sizes for other platforms came along for the ride. I don’t think anyone will complain.

link

Just as exciting to me as the feature itself is how it’s implemented: I added a log parser to treeherder which just picks up a line called “PERFHERDER_DATA” in the logs with specially formatted JSON data, and then automatically stores whatever metrics are in there in the database (platform, options, etc. are automatically determined). For example, on Linux:

PERFHERDER_DATA: {"framework": {"name": "build_metrics"}, "suites": [{"subtests": [{"name": "libxul.so", "value": 99030741}], "name": "installer size", "value": 55555785}]}

This should make it super easy for people to add their own metrics to Perfherder for build and test jobs. We’ll have to be somewhat careful about how we do this (we don’t want to add thousands of new series with irrelevant / inconsistent data) but I think there’s lots of potential here to be able to track things we care about on a per-commit basis. Maybe build times (?).

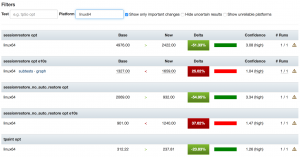

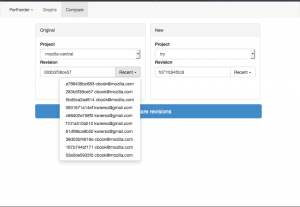

More compare view improvements

I added filtering to the Perfherder compare view and added back links to the graphs view. Filtering should make it easier to highlight particular problematic tests in bug reports, etc. The graphs links shouldn’t really be necessary, but unfortunately are due to the unreliability of our data — sometimes you can only see if a particular difference between two revisions is worth paying attention to in the context of the numbers over the last several weeks.

Miscellaneous

Even after the summer of contribution has ended, Mike Ling continues to do great work. Looking at the commit log over the past few weeks, he’s been responsible for the following fixes and improvements:

- Bug 1218825: Can zoom in on perfherder graphs by selecting the main view

- Bug 1207309: Disable ‘<’ button in test chooser if no test selected

- Bug 1210503 : Include non-summary tests in main comparison view

- Bug 1153956 : Persist the selected revision in the url on perfherder (based on earlier work by Akhilesh Pillai)

Next up

My main goal for this quarter is to create a fully functional interface for actually sheriffing performance regressions, to replace alertmanager. Work on this has been going well. More soon.

I spent a good chunk of time last quarter redesigning how Perfherder stores its data internally. Here are some notes on this change, for posterity.

Perfherder’s data model is based around two concepts:

- Series signatures: A unique set of properties (platform, test name, suite name, options) that identifies a performance test.

- Series data: A set of measurements for a series signature, indexed by treeherder push and job information.

When it was first written, Perfherder stored the second type of data as a JSON-encoded series in a relational (MySQL) database. That is, instead of storing each datum as a row in the database, we would store sequences of them. The assumption was that for the common case (getting a bunch of data to plot on a graph), this would be faster than fetching a bunch of rows and then encoding them as JSON. Unfortunately this wasn’t really true, and it had some serious drawbacks besides.

First, the approach’s performance was awful when it came time to add new data. To avoid needing to decode or download the full stored series when you wanted to render only a small subset of it, we stored the same series multiple times over various time intervals. For example, we stored the series data for one day, one week: all the way up to one year. You can probably see the problem already: you have to decode and re-encode the same data structure many times for each time interval for every new performance datum you were inserting into the database. The pseudo code looked something like this for each push:

for each platform we're testing talos on:

for each talos job for the platform:

for each test suite in the talos job:

for each subtest in the test suite:

for each time interval in one year, 90 days, 60 days, ...:

fetch and decode json series for that time interval from db

add datapoint to end of series

re-encode series as json and store in db

Consider that we have some 6 platforms (android, linux64, osx, winxp, win7, win8), 20ish test suites with potentially dozens of subtests and you can see where the problems begin.

In addition to being slow to write, this was also a pig in terms of disk space consumption. The overhead of JSON ("{, }" characters, object properties) really starts to add up when you’re storing millions of performance measurements. We got around this (sort of) by gzipping the contents of these series, but that still left us with gigantic mysql replay logs as we stored the complete “transaction” of replacing each of these series rows thousands of times per day. At one point, we completely ran out of disk space on the treeherder staging instance due to this issue.

Read performance was also often terrible for many common use cases. The original assumption I mentioned above was wrong: rendering points on a graph is only one use case a system like Perfherder has to handle. We also want to be able to get the set of series values associated with two result sets (to render comparison views) or to look up the data associated with a particular job. We were essentially indexing the performance data only on one single dimension (time) which made these other types of operations unnecessarily complex and slow — especially as the data you want to look up ages. For example, to look up a two week old comparison between two pushes, you’d also have to fetch the data for every subsequent push. That’s a lot of unnecessary overhead when you’re rendering a comparison view with 100 or so different performance tests:

So what’s the alternative? It’s actually the most obvious thing: just encode one database row per performance series value and create indexes on each of the properties that we might want to search on (repository, timestamp, job id, push id). Yes, this is a lot of rows (the new database stands at 48 million rows of performance data, and counting) but you know what? MySQL is designed to handle that sort of load. The current performance data table looks like this:

+----------------+------------------+

| Field | Type |

+----------------+------------------+

| id | int(11) |

| job_id | int(10) unsigned |

| result_set_id | int(10) unsigned |

| value | double |

| push_timestamp | datetime(6) |

| repository_id | int(11) |

| signature_id | int(11) |

+----------------+------------------+

MySQL can store each of these structures very efficiently, I haven’t done the exact calculations, but this is well under 50 bytes per row. Including indexes, the complete set of performance data going back to last year clocks in at 15 gigs. Not bad. And we can examine this data structure across any combination of dimensions we like (push, job, timestamp, repository) making common queries to perfherder very fast.

What about the initial assumption, that it would be faster to get a series out of the database if it’s already pre-encoded? Nope, not really. If you have a good index and you’re only fetching the data you need, the overhead of encoding a bunch of database rows to JSON is pretty minor. From my (remote) location in Toronto, I can fetch 30 days of tcheck2 data in 250 ms. Almost certainly most of that is network latency. If the original implementation was faster, it’s not by a significant amount.

Lesson: Sometimes using ancient technologies (SQL) in the most obvious way is the right thing to do. DoTheSimplestThingThatCouldPossiblyWork

A few months ago, Joel Maher announced the Perfherder summer of contribution. We wrapped things up there a few weeks ago, so I guess it’s about time I wrote up a bit about how things went.

As a reminder, the idea of summer of contribution was to give a set of contributors the opportunity to make a substantial contribution to a project we were working on (in this case, the Perfherder performance sheriffing system). We would ask that they sign up to do 5–10 hours of work a week for at least 8 weeks. In return, Joel and myself would make ourselves available as mentors to answer questions about the project whenever they ran into trouble.

To get things rolling, I split off a bunch of work that we felt would be reasonable to do by a contributor into bugs of varying difficulty levels (assigning them the bugzilla whiteboard tag ateam-summer-of-contribution). When someone first expressed interest in working on the project, I’d assign them a relatively easy front end one, just to cover the basics of working with the project (checking out code, making a change, submitting a PR to github). If they made it through that, I’d go on to assign them slightly harder or more complex tasks which dealt with other parts of the codebase, the nature of which depended on what they wanted to learn more about. Perfherder essentially has two components: a data storage and analysis backend written in Python and Django, and a web-based frontend written in JS and Angular. There was (still is) lots to do on both, which gave contributors lots of choice.

This system worked pretty well for attracting people. I think we got at least 5 people interested and contributing useful patches within the first couple of weeks. In general I think onboarding went well. Having good documentation for Perfherder / Treeherder on the wiki certainly helped. We had lots of the usual problems getting people familiar with git and submitting proper pull requests: we use a somewhat clumsy combination of bugzilla and github to manage treeherder issues (we “attach” PRs to bugs as plaintext), which can be a bit offputting to newcomers. But once they got past these issues, things went relatively smoothly.

A few weeks in, I set up a fortnightly skype call for people to join and update status and ask questions. This proved to be quite useful: it let me and Joel articulate the higher-level vision for the project to people (which can be difficult to summarize in text) but more importantly it was also a great opportunity for people to ask questions and raise concerns about the project in a free-form, high-bandwidth environment. In general I’m not a big fan of meetings (especially status report meetings) but I think these were pretty useful. Being able to hear someone else’s voice definitely goes a long way to establishing trust that you just can’t get in the same way over email and irc.

I think our biggest challenge was retention. Due to (understandable) time commitments and constraints only one person (Mike Ling) was really able to stick with it until the end. Still, I’m pretty happy with that success rate: if you stop and think about it, even a 10-hour a week time investment is a fair bit to ask. Some of the people who didn’t quite make it were quite awesome, I hope they come back some day.

—

On that note, a special thanks to Mike Ling for sticking with us this long (he’s still around and doing useful things long after the program ended). He’s done some really fantastic work inside Perfherder and the project is much better for it. I think my two favorite features that he wrote up are the improved test chooser which I talked about a few months ago and a get related platform / branch feature which is a big time saver when trying to determine when a performance regression was first introduced.

I took the time to do a short email interview with him last week. Here’s what he had to say:

- Tell us a little bit about yourself. Where do you live? What is it you do when not contributing to Perfherder?

I’m a postgraduate student of NanChang HangKong university in China whose major is Internet of things. Actually,there are a lot of things I would like to do when I am AFK, play basketball, video game, reading books and listening music, just name it ; )

- How did you find out about the ateam summer of contribution program?

well, I remember when I still a new comer of treeherder, I totally don’t know how to start my contribution. So, I just go to treeherder irc and ask for advice. As I recall, emorley and jfrench talk with me and give me a lot of hits. Then Will (wlach) send me an Email about ateam summer of contribution and perfherder. He told me it’s a good opportunity to learn more about treeherder and how to work like a team! I almost jump out of bed (I receive that email just before get asleep) and reply with YES. Thank you Will!

- What did you find most challenging in the summer of contribution?

I think the most challenging thing is I not only need to know how to code but also need to know how treeherder actually work. It’s a awesome project and there are a ton of things I haven’t heard before (i.e T-test, regression). So I still have a long way to go before I familiar with it.

- What advice would give you to future ateam contributors?

The only thing you need to do is bring your question to irc and ask. Do not hesitate to ask for help if you need it! All the people in here are nice and willing to help. Enjoy it!

Since my last update, we’ve been trucking along with improvements to Perfherder, the project for making Firefox performance sheriffing and analysis easier.

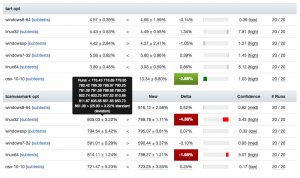

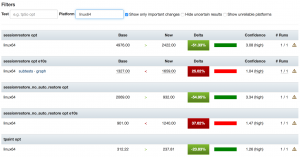

Compare visualization improvements

I’ve been spending quite a bit of time trying to fix up the display of information in the compare view, to address feedback from developers and hopefully generally streamline things. Vladan (from the perf team) referred me to Blake Winton, who provided tons of awesome suggestions on how to present things more concisely.

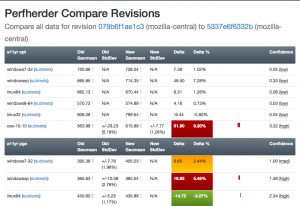

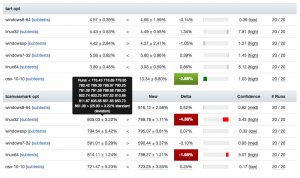

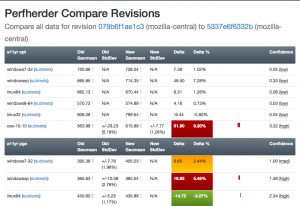

Here’s an old versus new picture:

Summary of significant changes in this view:

- Removed or consolidated several types of numerical information which were overwhelming or confusing (e.g. presenting both numerical and percentage standard deviation in their own columns).

- Added tooltips all over the place to explain what’s being displayed.

- Highlight more strongly when it appears there aren’t enough runs to make a definitive determination on whether there was a regression or improvement.

- Improve display of visual indicator of magnitude of regression/improvement (providing a pseudo-scale showing where the change ranges from 0% : 20%+).

- Provide more detail on the two changesets being compared in the header and make it easier to retrigger them (thanks to Mike Ling).

- Much better and more intuitive error handling when something goes wrong (also thanks to Mike Ling).

The point of these changes isn’t necessarily to make everything “immediately obvious” to people. We’re not building general purpose software here: Perfherder will always be a rather specialized tool which presumes significant domain knowledge on the part of the people using it. However, even for our audience, it turns out that there’s a lot of room to improve how our presentation: reducing the amount of extraneous noise helps people zero in on the things they really need to care about.

Special thanks to everyone who took time out of their schedules to provide so much good feedback, in particular Avi Halmachi, Glandium, and Joel Maher.

Of course more suggestions are always welcome. Please give it a try and file bugs against the perfherder component if you find anything you’d like to see changed or improved.

Getting the word out

Hammersmith:mozilla-central wlach$ hg push -f try

pushing to ssh://hg.mozilla.org/try

no revisions specified to push; using . to avoid pushing multiple heads

searching for changes

remote: waiting for lock on repository /repo/hg/mozilla/try held by 'hgssh1.dmz.scl3.mozilla.com:8270'

remote: got lock after 4 seconds

remote: adding changesets

remote: adding manifests

remote: adding file changes

remote: added 1 changesets with 1 changes to 1 files

remote: Trying to insert into pushlog.

remote: Inserted into the pushlog db successfully.

remote:

remote: View your change here:

remote: https://hg.mozilla.org/try/rev/e0aa56fb4ace

remote:

remote: Follow the progress of your build on Treeherder:

remote: https://treeherder.mozilla.org/#/jobs?repo=try&revision=e0aa56fb4ace

remote:

remote: It looks like this try push has talos jobs. Compare performance against a baseline revision:

remote: https://treeherder.mozilla.org/perf.html#/comparechooser?newProject=try&newRevision=e0aa56fb4ace

Try pushes incorporating Talos jobs now automatically link to perfherder’s compare view, both in the output from mercurial and in the emails the system sends. One of the challenges we’ve been facing up to this point is just letting developers know that Perfherder exists and it can help them either avoid or resolve performance regressions. I believe this will help.

Data quality and ingestion improvements

Over the past couple weeks, we’ve been comparing our regression detection code when run against Graphserver data to Perfherder data. In doing so, we discovered that we’ve sometimes been using the wrong algorithm (geometric mean) to summarize some of our tests, leading to unexpected and less meaningful results. For example, the v8_7 benchmark uses a custom weighting algorithm for its score, to account for the fact that the things it tests have a particular range of expected values.

To hopefully prevent this from happening again in the future, we’ve decided to move the test summarization code out of Perfherder back into Talos (bug 1184966). This has the additional benefit of creating a stronger connection between the content of the Talos logs and what Perfherder displays in its comparison and graph views, which has thrown people off in the past.

Continuing data challenges

Having better tools for visualizing this stuff is great, but it also highlights some continuing problems we’ve had with data quality. It turns out that our automation setup often produces qualitatively different performance results for the exact same set of data, depending on when and how the tests are run.

A certain amount of random noise is always expected when running performance tests. As much as we might try to make them uniform, our testing machines and environments are just not 100% identical. That we expect and can deal with: our standard approach is just to retrigger runs, to make sure we get a representative sample of data from our population of machines.

The problem comes when there’s a pattern to the noise: we’ve already noticed that tests run on the weekends produce different results (see Joel’s post from a year ago, “A case of the weekends”) but it seems as if there’s other circumstances where one set of results will be different from another, depending on the time that each set of tests was run. Some tests and platforms (e.g. the a11yr suite, MacOS X 10.10) seem particularly susceptible to this issue.

We need to find better ways of dealing with this problem, as it can result in a lot of wasted time and energy, for both sheriffs and developers. See for example bug 1190877, which concerned a completely spurious regression on the tresize benchmark that was initially blamed on some changes to the media code: in this case, Joel speculates that the linux64 test machines we use might have changed from under us in some way, but we really don’t know yet.

I see two approaches possible here:

- Figure out what’s causing the same machines to produce qualitatively different result distributions and address that. This is of course the ideal solution, but it requires coordination with other parts of the organization who are likely quite busy and might be hard.

- Figure out better ways of detecting and managing these sorts of case. I have noticed that the standard deviation inside the results when we have spurious regressions/improvements tends to be higher (see for example this compare view for the aforementioned “regression”). Knowing what we do, maybe there’s some statistical methods we can use to detect bad data?

For now, I’m leaning towards (2). I don’t think we’ll ever completely solve this problem and I think coming up with better approaches to understanding and managing it will pay the largest dividends. Open to other opinions of course!

Haven’t been doing enough blogging about Perfherder (our project to make Talos and other per-checkin performance data more useful) recently. Let’s fix that. We’ve been making some good progress, helped in part by a group of new contributors that joined us through an experimental "summer of contribution" program.

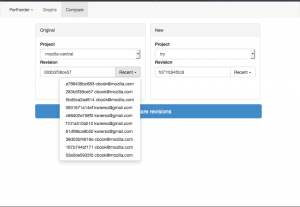

Comparison mode

Inspired by Compare Talos, we’ve designed something similar which hooks into the perfherder backend. This has already gotten some interest: see this post on dev.tree-management and this one on dev.platform. We’re working towards building something that will be really useful both for (1) illustrating that the performance regressions we detect are real and (2) helping developers figure out the impact of their changes before they land them.

|

|

Most of the initial work was done by Joel Maher with lots of review for aesthetics and correctness by me. Avi Halmachi from the Performance Team also helped out with the t-test model for detecting the confidence that we have that a difference in performance was real. Lately myself and Mike Ling (one of our summer of contribution members) have been working on further improving the interface for usability — I’m hopeful that we’ll soon have something implemented that’s broadly usable and comprehensible to the Mozilla Firefox and Platform developer community.

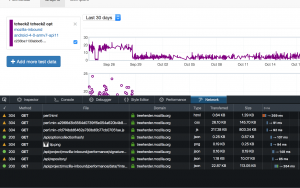

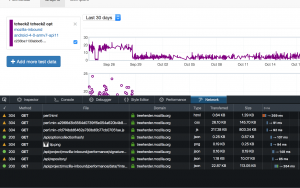

Graphs improvements

Although it’s received slightly less attention lately than the comparison view above, we’ve been making steady progress on the graphs view of performance series. Aside from demonstrations and presentations, the primary use case for this is being able to detect visually sustained changes in the result distribution for talos tests, which is often necessary to be able to confirm regressions. Notable recent changes include a much easier way of selecting tests to add to the graph from Mike Ling and more readable/parseable urls from Akhilesh Pillai (another summer of contribution participant).

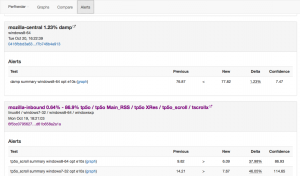

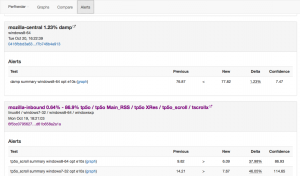

Performance alerts

I’ve also been steadily working on making Perfherder generate alerts when there is a significant discontinuity in the performance numbers, similar to what GraphServer does now. Currently we have an option to generate a static CSV file of these alerts, but the eventual plan is to insert these things into a peristent database. After that’s done, we can actually work on creating a UI inside Perfherder to replace alertmanager (which currently uses GraphServer data) and start using this thing to sheriff performance regressions — putting the herder into perfherder.

As part of this, I’ve converted the graphserver alert generation code into a standalone python library, which has already proven useful as a component in the Raptor project for FirefoxOS. Yay modularity and reusability.

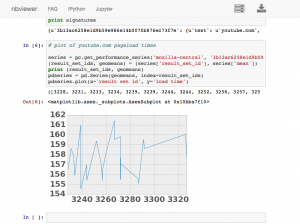

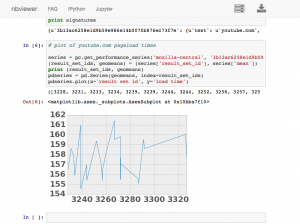

Python API

I’ve also been working on creating and improving a python API to access Treeherder data, which includes Perfherder. This lets you do interesting things, like dynamically run various types of statistical analysis on the data stored in the production instance of Perfherder (no need to ask me for a database dump or other credentials). I’ve been using this to perform validation of the data we’re storing and debug various tricky problems. For example, I found out last week that we were storing up to duplicate 200 entries in each performance series due to double data ingestion — oops.

You can also use this API to dynamically create interesting graphs and visualizations using ipython notebook, here’s a simple example of me plotting the last 7 days of youtube.com pageload data inline in a notebook:

[original]

So I went to PyCon 2015. While I didn’t leave quite as inspired as I did in 2014 (when I discovered iPython), it was a great experience and I learned a ton. Once again, I was incredibly impressed with the organization of the conference and the diversity and quality of the speakers.

Since Mozilla was nice enough to sponsor my attendance, I figured I should do another round up of notable talks that I went to.

Technical stuff that was directly relevant to what I work on:

- To ORM or not to ORM (Christine Spang): Useful talk on when using a database ORM (object relational manager) can be helpful and even faster than using a database directly. I feel like there’s a lot of misinformation and FUD on this topic, so this was refreshing to see. video slides

- Debugging hard problems (Alex Gaynor): Exactly what it says — how to figure out what’s going on when things aren’t behaving as they should. Great advice and wisdom in this one (hint: take nothing for granted, dive into the source of everything you’re using!). video slides

- Python Performance Profiling: The Guts And The Glory (Jesse Jiryu Davis): Quite an entertaining talk on how to properly profile python code. I really liked his systematic and realistic approach — which also discussed the thought process behind how to do this (hint: again it comes down to understanding what’s really going on). Unfortunately the video is truncated, but even the first few minutes are useful. video

Non-technical stuff:

- The Ethical Consequences Of Our Collective Activities (Glyph): A talk on the ethical implications of how our software is used. I feel like this is an under-discussed topic — how can we know that the results of our activity (programming) serves others and does not harm? video

- How our engineering environments are killing diversity (and how we can fix it) (Kate Heddleston): This was a great talk on how to make the environments in which we develop more welcoming to under-represented groups (women, minorities, etc.). This is something I’ve been thinking a bunch about lately, especially in the context of expanding the community of people working on our projects in Automation & Tools. The talk had some particularly useful advice (to me, anyway) on giving feedback. video slides

I probably missed out on a bunch of interesting things. If you also went to PyCon, please feel free to add links to your favorite talks in the comments!

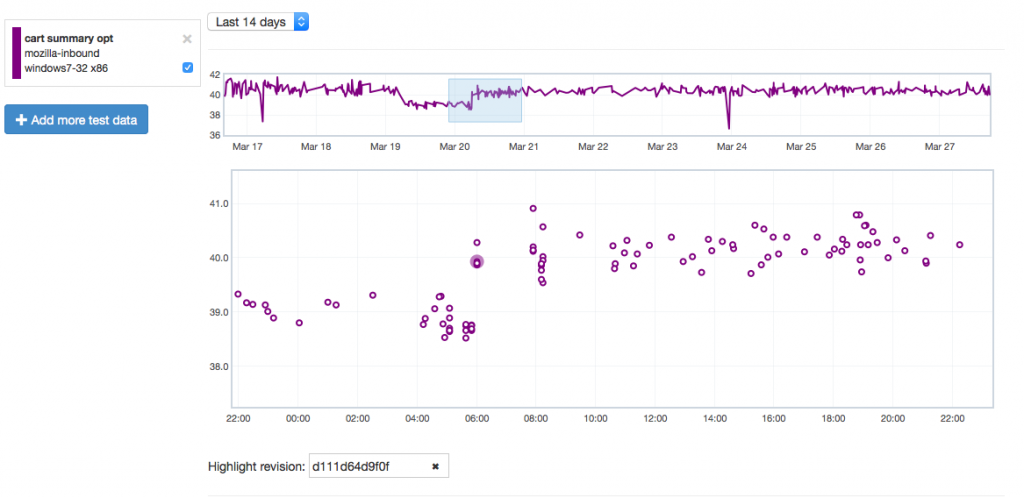

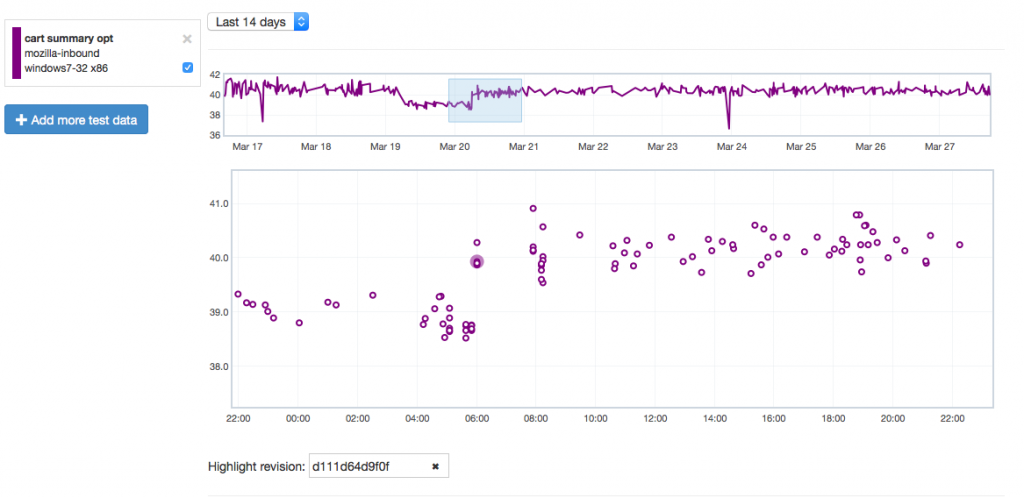

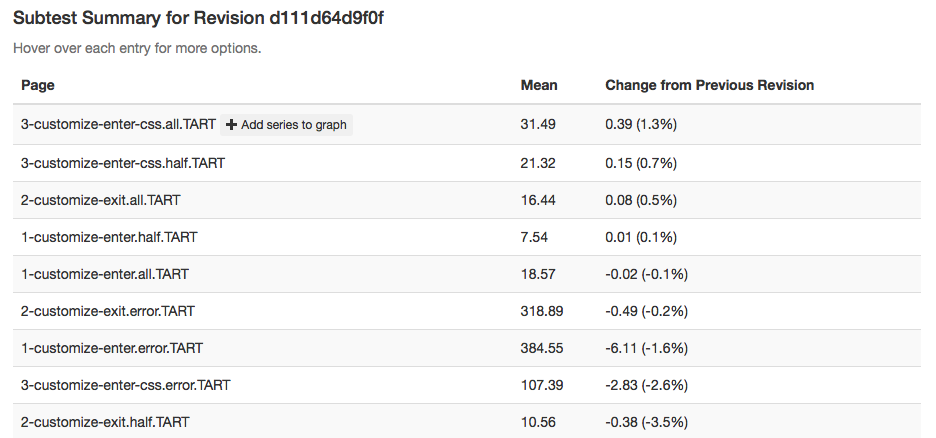

Just wanted to give another quick Perfherder update. Since the last time, I’ve added summary series (which is what GraphServer shows you), so we now have (in theory) the best of both worlds when it comes to Talos data: aggregate summaries of the various suites we run (tp5, tart, etc), with the ability to dig into individual results as needed. This kind of analysis wasn’t possible with Graphserver and I’m hopeful this will be helpful in tracking down the root causes of Talos regressions more effectively.

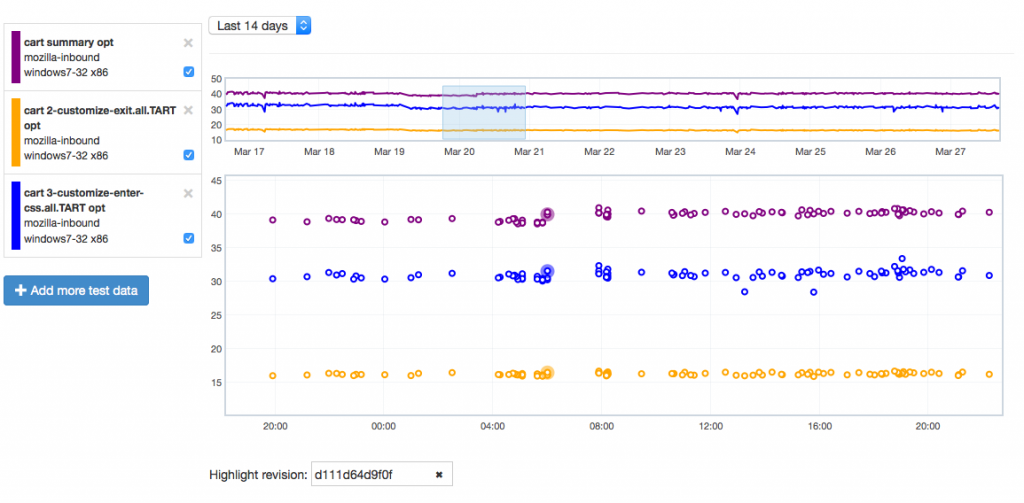

Let’s give an example of where this might be useful by showing how it can highlight problems. Recently we tracked a regression in the Customization Animation Tests (CART) suite from the commit in bug 1128354. Using Mishra Vikas‘s new “highlight revision mode” in Perfherder (combined with the revision hash when the regression was pushed to inbound), we can quickly zero in on the location of it:

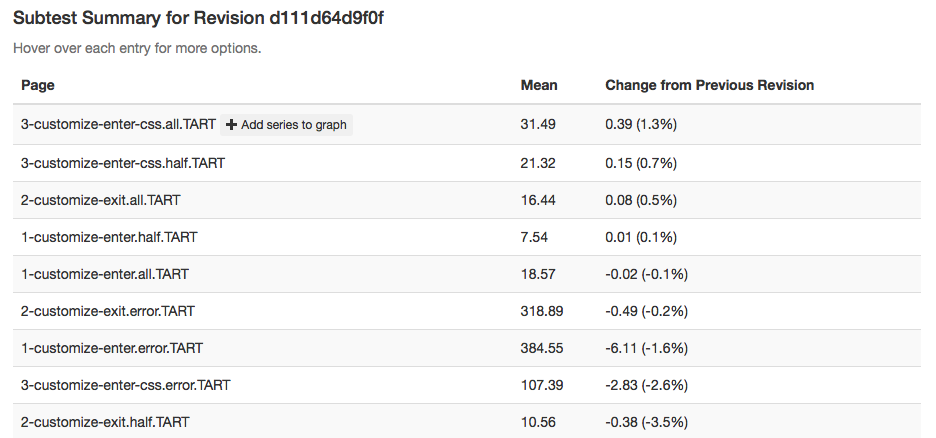

It does indeed look like things ticked up after this commit for the CART suite, but why? By clicking on the datapoint, you can open up a subtest summary view beneath the graph:

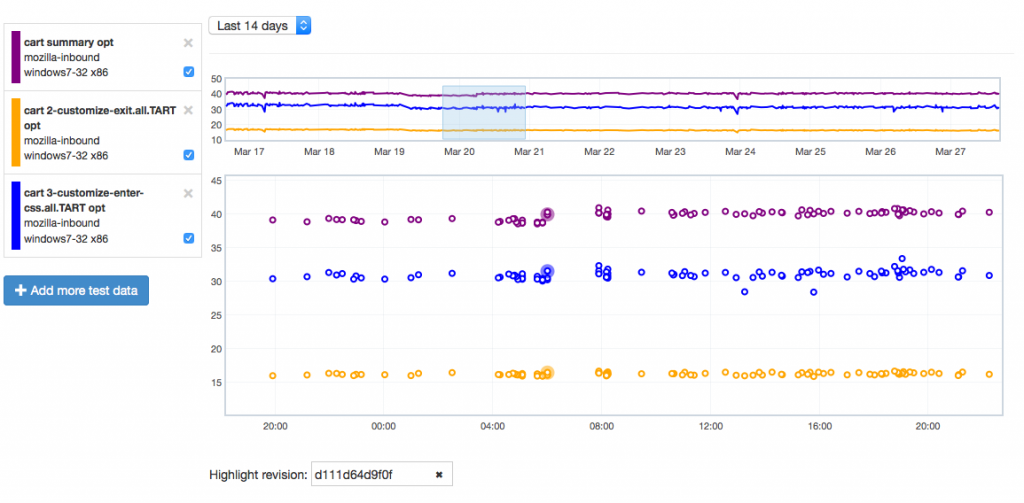

We see here that it looks like the 3-customize-enter-css.all.TART entry ticked up a bunch. The related test 3-customize-enter-css.half.TART ticked up a bit too. The changes elsewhere look minimal. But is that a trend that holds across the data over time? We can add some of the relevant subtests to the overall graph view to get a closer look:

As is hopefully obvious, this confirms that the affected subtest continues to hold its higher value while another test just bounces around more or less in the range it was before.

Hope people find this useful! If you want to play with this yourself, you can access the perfherder UI at http://treeherder.mozilla.org/perf.html.

For the past few months I’ve been working on a sub-project of Treeherder called Perfherder, which aims to provide a workflow that will let us more easily detect and manage performance regressions in our products (initially just those detected in Talos, but there’s room to expand on that later). This is a long term project and we’re still sorting out the details of exactly how it will work, but I thought I’d quickly announce a milestone.

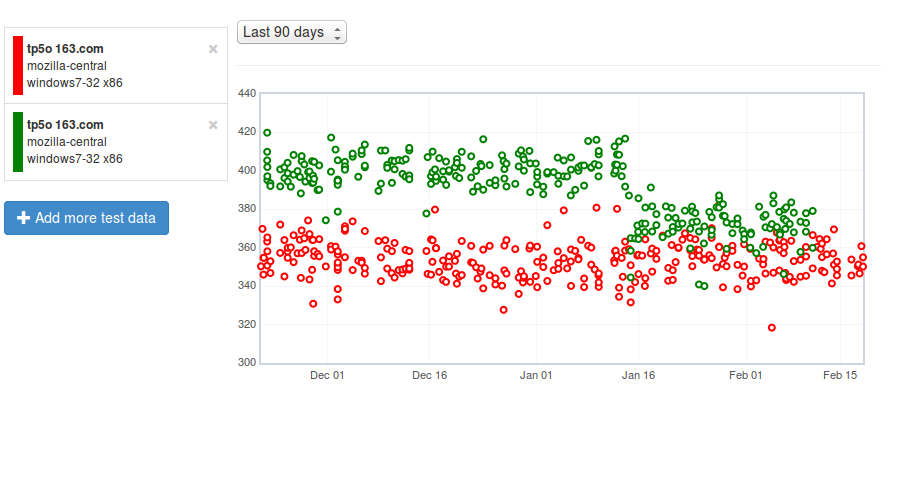

As a first step, I’ve been hacking on a graphical user interface to visualize the performance data we’re now storing inside Treeherder. It’s pretty bare bones so far, but already it has two features which graphserver doesn’t: the ability to view sub-test results (i.e. the page load time for a specific page in the tp5 suite, as opposed to the geometric mean of all of them) and the ability to see results for e10s builds.

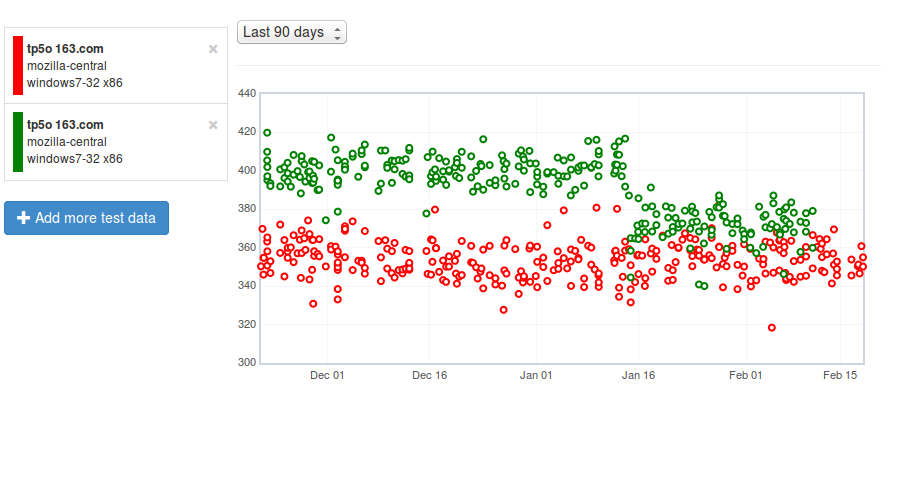

Here’s an example, comparing the tp5o 163.com page load times on windows 7 with e10s enabled (and not):

[link]

Green is e10s, red is non-e10s (the legend picture doesn’t reflect this because we have yet to deploy a fix to bug 1130554, but I promise I’m not lying). As you can see, the gap has been closing (in particular, something landed in mid-January that improved the e10s numbers quite a bit), but page load times are still measurably slower with this feature enabled.